We are excited to announce the availability of the developer preview of WebNN, a web standard for cross-platform, hardware-accelerated neural network inference in the browser, using DirectML and ONNX Runtime Web. This preview enables web developers to leverage the power and performance of DirectML across GPUs, with support coming soon for Intel® Core™ Ultra processors with Intel® AI Boost and for Copilot+ PCs powered by Qualcomm® Hexagon™ NPUs.

WebNN is a game-changer for web development. It's an emerging web standard that defines how to run machine learning models in the browser using the hardware acceleration of your local device's GPU or NPU. Web applications can use machine learning without any extra software or plugins and, because inference runs on your own device, without compromising your privacy or security. WebNN opens up new possibilities for web applications, such as generative AI, object recognition, natural language processing, and more.

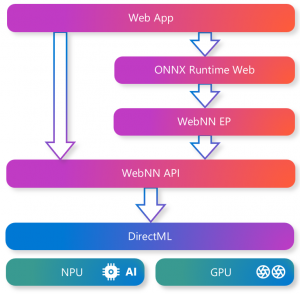

WebNN defines how the browser interfaces with different backends for hardware-accelerated ML inference. One of these backends is DirectML, which provides performant ML acceleration across the wide range of hardware found in Windows devices. By building on DirectML, WebNN inherits its hardware reach, performance, and reliability.

With WebNN, you can unleash the power of ML models in your web app. It exposes the core building blocks of ML: tensors, operators, and graphs. You can also combine it with ONNX Runtime Web, a JavaScript library for running ONNX models in the browser. ONNX Runtime Web includes a WebNN Execution Provider that simplifies your use of WebNN.
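As a rough sketch of what using the WebNN Execution Provider can look like, the TypeScript below builds ONNX Runtime Web session options that request the WebNN provider on the GPU and list WebAssembly ("wasm") after it as a fallback. To keep the example self-contained, `OrtLike` and `SessionOptionsLike` are hypothetical stand-ins for the parts of `onnxruntime-web` it uses, and the helper names `webnnSessionOptions` and `createSession` are our own. The `{ name: "webnn", deviceType: "gpu" }` provider entry follows the ONNX Runtime Web documentation as we understand it, so please check the docs for your ORT Web version, including which import path ships WebNN support.

```typescript
// Hypothetical stand-ins for the parts of onnxruntime-web used below.
// In a real page you would import the library (for example
// `import * as ort from "onnxruntime-web"`) and pass that module in,
// adjusting types as needed for your ORT Web version.
type ExecutionProvider =
  | string
  | { name: string; deviceType?: "cpu" | "gpu" | "npu" };

interface SessionOptionsLike {
  executionProviders: ExecutionProvider[];
}

interface OrtLike<S> {
  InferenceSession: {
    create(modelUrl: string, options: SessionOptionsLike): Promise<S>;
  };
}

// Request the WebNN execution provider on the GPU (DirectML on Windows in this
// preview) when the browser exposes WebNN, and always list WebAssembly last.
function webnnSessionOptions(webnnAvailable: boolean): SessionOptionsLike {
  const providers: ExecutionProvider[] = [];
  if (webnnAvailable) {
    providers.push({ name: "webnn", deviceType: "gpu" });
  }
  providers.push("wasm");
  return { executionProviders: providers };
}

// Create an inference session for an ONNX model using the options above.
function createSession<S>(
  ort: OrtLike<S>,
  modelUrl: string,
  webnnAvailable: boolean,
): Promise<S> {
  return ort.InferenceSession.create(modelUrl, webnnSessionOptions(webnnAvailable));
}
```

In a page, a call might look like `createSession(ort, "./model.onnx", "ml" in navigator)`, where `./model.onnx` is a placeholder path. `navigator.ml` is WebNN's entry point, so checking for it is a simple feature test before requesting the WebNN provider.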

To learn more or to see this in action, be sure to check out our Microsoft Build sessions, listed under Learn more below.

Here is what our hardware vendor partners have to say:

- AMD: AMD is excited about the launch of WebNN with DirectML enabling local execution of generative AI machine learning models on AMD hardware. Learn more about where else AMD is investing with DirectML.

- Intel: Intel looks forward to the new possibilities WebNN and DirectML bring to web developers. Learn more about our investments in WebNN, and please download the latest driver for best performance.

- NVIDIA: NVIDIA is excited to see DirectML powering WebNN to bring even more ways for web apps to leverage hardware acceleration on RTX GPUs. Check out all the NVIDIA-related Microsoft Build announcements around RTX AI PCs and NVIDIA's expanded collaboration with Microsoft.

Getting Started with the WebNN Developer Preview

With the WebNN Developer Preview, powered by DirectML and ONNX Runtime Web, you can run ONNX models in the browser with hardware acceleration and minimal code changes.

To get started with WebNN on DirectX 12-compatible GPUs, you will need:

- Windows 11, version 21H2 or newer

- ONNX Runtime Web, version 1.18 or newer

- Microsoft Edge Canary channel, with the WebNN flag enabled in about:flags
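A page can check part of this list from script before loading a model. The sketch below is a hypothetical preflight helper, with names of our own choosing (`preflight`, `atLeast`, `parseVersion`): it treats a missing `navigator.ml` as a sign that the browser lacks WebNN or the flag is off, and compares the ONNX Runtime Web version your app bundles against 1.18. It leaves the Windows version check out.

```typescript
// Hypothetical preflight check for two of the preview's prerequisites.
// The Windows version requirement is not checked in this sketch.

interface PreflightResult {
  webnn: boolean;
  ortVersionOk: boolean;
  problems: string[];
}

// Minimum ONNX Runtime Web version for the WebNN Developer Preview.
const MIN_ORT_WEB: readonly number[] = [1, 18, 0];

// Parse "major.minor.patch", ignoring any "-prerelease" or "+build" suffix
// (so "1.18.0-dev.1" is treated like "1.18.0"); missing or invalid parts count as 0.
function parseVersion(version: string): number[] {
  const core = version.split(/[-+]/)[0];
  const parts = core.split(".").map((p) => Number.parseInt(p, 10));
  while (parts.length < 3) parts.push(0);
  return parts.map((n) => (Number.isNaN(n) ? 0 : n));
}

// True if `version` >= `minimum`, comparing each component numerically.
function atLeast(version: string, minimum: readonly number[]): boolean {
  const v = parseVersion(version);
  for (let i = 0; i < minimum.length; i++) {
    if (v[i] !== minimum[i]) return v[i] > minimum[i];
  }
  return true;
}

// `hasNavigatorMl` would typically be `"ml" in navigator`; navigator.ml is
// WebNN's entry point, so its absence suggests an unsupported browser or a disabled flag.
function preflight(hasNavigatorMl: boolean, ortWebVersion: string): PreflightResult {
  const problems: string[] = [];
  if (!hasNavigatorMl) {
    problems.push("WebNN not available: use Edge Canary with the WebNN flag enabled in about:flags");
  }
  const ortVersionOk = atLeast(ortWebVersion, MIN_ORT_WEB);
  if (!ortVersionOk) {
    problems.push(`ONNX Runtime Web ${ortWebVersion} is older than 1.18`);
  }
  return { webnn: hasNavigatorMl, ortVersionOk, problems };
}
```

In a page, you might call `preflight("ml" in navigator, "1.18.0")`, substituting the version your app actually bundles (for example, from package.json), and surface any `problems` to the user. Comparing components numerically matters here: a plain string comparison would rank "1.9.0" above "1.18.0".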

For more instructions and information about supported models and operators, please visit our documentation. To try out samples, please visit the WebNN Developer Preview page.

Learn more

Be sure to check out these sessions at Microsoft’s Build Conference to learn more about WebNN:

- BRK240: Bring AI experiences to all your Windows Devices

- StudioFP126: The Web is AI Ready—maximize your AI web development with WebNN

Additional WebNN documentation and samples:

Source: Windows Blog
