Just over one year ago, we started working on a best practices tool for the web called sonarwhal, a customizable, open-source linting tool built for modern web developer workflows. Today, we are announcing the release of its first major version. With today’s launch, we’d like to look back at how sonarwhal started and at the journey to v1 over the past few months.

It all started with feedback from web developers, partners, and from our own experiences building for the web. The web platform is becoming richer at a faster pace than ever before: we now have web experiences we couldn’t even imagine a few years back. Sites can work offline, push notifications (even when the user is not visiting the site), and even run code at native speeds with WebAssembly. On top of keeping up to date, developers also need to know about many other things like accessibility, security, performance, bundling, transpiling, etc.

Every day, we see sites that have a great architecture, built with the latest libraries and tools, but that don’t use the right cache policy for their static assets, or that don’t compress everything they should, or with common security flaws—and that’s just scratching the surface. The web is complex and it can be easy to miss something at any point during the development process.

Being a web developer can be overwhelming

What could we do to help web developers make their sites faster, more secure and accessible while at the same time making sure they remain interoperable across browsers? And that’s how it all started. Picking the name was the easiest part: the team loves narwhals and they have one of the best echolocation beams (or sonars) in nature, so it was an obvious choice.

We knew from the beginning that we wanted sonarwhal to be built by and for the web community. We didn’t want to bake our personal opinions into sonarwhal’s rules. We wanted sonarwhal to be backed by deep research, remain neutral, and allow contributions from any individual or company. Thus, we decided to make sonarwhal an open source project as part of the JS Foundation.

Since then, we’ve kept ourselves busy listening to your feedback and implementing many of the goals we had back then, while adding some new ones.

When creating a new rule, we follow the 80/20 principle:

80% of the time is research and 20% is coding

If there’s one thing we are most proud of, it’s the extensive research we do on each subject and how deep the rules go to check that everything is as it should be.

Just to give you some examples:

- The http-compression rule will perform several requests for each resource with different headers and check the content to validate the server is actually respecting them. E.g.: When resources are requested uncompressed, does the server actually respect what was requested and serve them uncompressed? Is the server doing User-Agent sniffing instead of relying on the Accept-Encoding header of the request? Is the server compressing resources using Zopfli when requests are made advertising support for gzip compression?

- The web manifest rules are also interesting. Does the web manifest point to an image? Does that image exist? Does the image meet the recommended resolution and file size? Does it have the right format to be used by any browser? Is the name of the web application short enough to be displayed on all platforms?

- The web is full of lies (starting with the user-agent string). Just because a file ends with .png and has content-type: image/png doesn’t mean it’s a PNG. It could very well be a JPEG file, or something completely different. And the same goes for every downloaded resource. The content-type rule will look at the bytes of the resources and verify that the server is actually serving what it says it is and, where applicable, that it is specifying the proper charset.

And the list goes on…
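To make the content-type example more concrete, here is a minimal sketch of byte sniffing. It is not sonarwhal’s actual implementation: the helper names sniffMediaType and contentTypeMatches are hypothetical, and only three well-known image signatures are covered.

```typescript
// Hypothetical helpers for illustration; not sonarwhal's actual code.
// Well-known file signatures ("magic numbers") for a few image formats.
const SIGNATURES: ReadonlyArray<{ mediaType: string; bytes: number[] }> = [
    { mediaType: "image/png", bytes: [0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a] },
    { mediaType: "image/jpeg", bytes: [0xff, 0xd8, 0xff] },
    { mediaType: "image/gif", bytes: [0x47, 0x49, 0x46, 0x38] }
];

/** Returns the media type suggested by the leading bytes, or null if unknown. */
function sniffMediaType(body: Uint8Array): string | null {
    for (const { mediaType, bytes } of SIGNATURES) {
        if (body.length >= bytes.length && bytes.every((b, i) => body[i] === b)) {
            return mediaType;
        }
    }

    return null;
}

/**
 * True when the declared content-type header agrees with what the bytes look like.
 * Formats this sketch does not recognize are reported as a mismatch.
 */
function contentTypeMatches(declared: string, body: Uint8Array): boolean {
    const sniffed = sniffMediaType(body);
    // Drop parameters such as "; charset=utf-8" and compare case-insensitively.
    const declaredType = (declared.split(";")[0] ?? "").trim().toLowerCase();

    return sniffed !== null && sniffed === declaredType;
}
```

With this sketch, a response that declares image/png but whose body starts with the bytes FF D8 FF would be flagged, because those bytes are the JPEG signature.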

More than 30 rules in 6 categories (and counting!)

sonarwhal validates many different things: from accessibility and content types, to verifying your JavaScript libraries don’t have any known vulnerabilities and that you are using SRI to validate that no one has tampered with the code.

Some of the issues require developers to change their code, but others require tweaking the server configuration. Changing the configuration might not be obvious, especially when targeting only certain resource types, or when using newer tools and techniques such as Brotli compression, which may not be as thoroughly documented. To make the developer experience easier, we’ve also added examples for Apache and IIS for the rules that require it.

Get started testing with our online scanner

sonarwhal runs on top of Node.js and is distributed via npm. But what happens if you want to check a site using your mobile phone? Or maybe your IT administrator doesn’t allow you to install any tool.

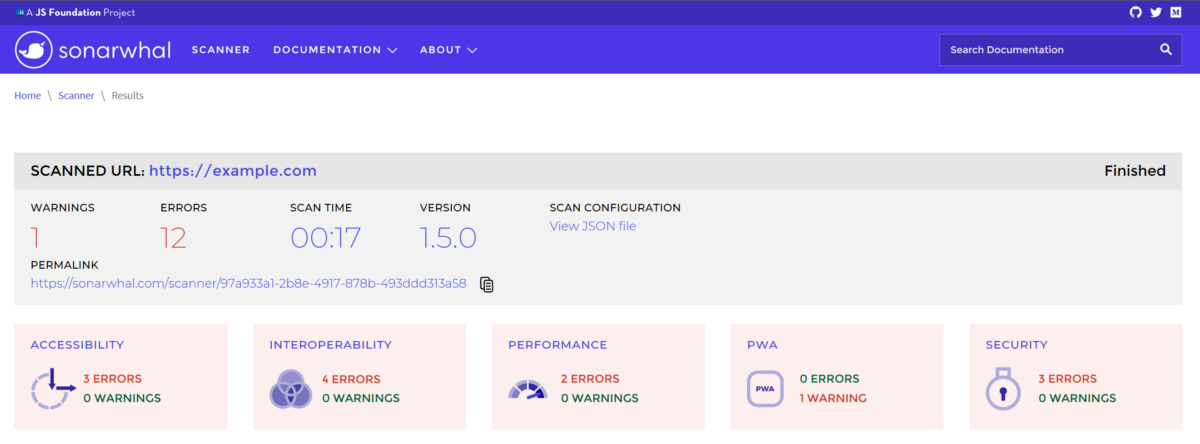

We needed a way to scale and make sonarwhal available anywhere with an internet connection. In November of last year we launched the online version. Since then, more than 160,000 URLs have been analyzed.

Each result page has its own permalink to allow you to go back to it later, or share it with anyone.

The code for the online scanner is available on GitHub.

Scan results for https://example.com

Configure sonarwhal to your needs

We think tools should be helpful and should stay out of your way. We can tell you what we think is important, but at the end of the day, you are the one that best understands what you are building and what requirements you have.

We built sonarwhal with strong defaults, but with the flexibility to let you decide what rules are relevant to your project, what URLs should be ignored, and what browser you want to use. Essentially, we want everything to be configurable.

To make it easier to reuse configurations, you can now extend from one or more existing configurations and tweak the properties you want.

For example, to use the configuration “web-recommended” you just have to:

    npm install @sonarwhal/configuration-web-recommended

And tell your .sonarwhalrc file to extend from it:

    {
        "extends": ["web-recommended"]
    }

If you want to tweak it, you can do this:

    {
        "extends": ["web-recommended"],
        "ignoredUrls": [{
            "domain": ".*.domain1.com/.*",
            "rules": ["*"]
        }],
        "rules": {
            "highest-available-document-mode": ["error", {
                "requireMetaTag": true
            }]
        }
    }

The above snippet will use the defaults of “web-recommended”, ignore all resources that match the regular expression .*.domain1.com/.* and enforce the X-UA-Compatible meta tag instead of the header.
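If you are unsure what a pattern like the one above will match, you can try it out on its own. The sketch below compiles the same string as a regular expression. The isIgnored helper is hypothetical, and exactly how sonarwhal applies the pattern internally (for example, partial versus full match) is an assumption here.

```typescript
// The pattern from the configuration above, compiled as-is.
const ignoredDomain = new RegExp(".*.domain1.com/.*");

/** Hypothetical helper: true when a resource URL falls under the ignore pattern. */
function isIgnored(url: string): boolean {
    return ignoredDomain.test(url);
}

console.log(isIgnored("https://cdn.domain1.com/app.js")); // true
console.log(isIgnored("https://example.com/app.js"));     // false
// The dots are unescaped, so each one matches any character:
console.log(isIgnored("https://cdn.domain1xcom/app.js")); // true
```

If you want the dots to match only literal dots, escape them. Inside a JSON string each backslash has to be doubled, for example ".*\\.domain1\\.com/.*".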

Without doubt, one of our favorite features is the adaptability of the rules. Depending on the browsers you want to support, some rules will adapt their feedback telling you the best approach for your specific case. We believe this is really important because not everybody gets to develop for the latest browser versions.

Easily extend sonarwhal with parsers to analyze files such as config files

Catching issues early in the development cycle is usually better than when the project is already shipped. To help with this we created the “local connector” that allows you to validate the files you are working with.

Building a website these days usually requires more than just writing HTML, JavaScript and CSS files. Developers use task managers, bundlers, transpilers, and compilers to generate their code. And each one of these needs to be configured, which in some cases is not easy. To tackle this problem, we came up with the concept of a parser. A parser understands a resource format and is capable of emitting information about it so rules can use it.
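The relationship between parsers and rules can be sketched roughly as follows. All names here (Hub, ParsedEvent, parseJsonResource, registerNameRule) are hypothetical and chosen for illustration; they are not sonarwhal’s actual APIs.

```typescript
// Hypothetical types for illustration; these are not sonarwhal's actual APIs.
type ParsedEvent = { resource: string; format: string; data: unknown };
type Listener = (event: ParsedEvent) => void;

/** A tiny event hub standing in for the engine that connects parsers and rules. */
class Hub {
    private listeners = new Map<string, Listener[]>();

    on(format: string, listener: Listener): void {
        const current = this.listeners.get(format) ?? [];

        this.listeners.set(format, [...current, listener]);
    }

    emit(event: ParsedEvent): void {
        for (const listener of this.listeners.get(event.format) ?? []) {
            listener(event);
        }
    }
}

/** A "parser": understands one format (JSON here) and emits the parsed content. */
function parseJsonResource(hub: Hub, resource: string, content: string): void {
    hub.emit({ resource, format: "json", data: JSON.parse(content) });
}

/** A "rule": subscribes to parsed data and reports the problems it finds. */
function registerNameRule(hub: Hub, report: (message: string) => void): void {
    hub.on("json", ({ resource, data }) => {
        const name = typeof data === "object" && data !== null
            ? (data as { name?: unknown }).name
            : undefined;

        if (typeof name !== "string") {
            report(`${resource}: missing "name"`);
        }
    });
}
```

The point of the split is that the rule never touches the raw file: it only reacts to whatever the parser emits, so new formats can be supported by adding parsers without changing existing rules.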

Parsers are a powerful concept. They allow sonarwhal to be expanded to support new scenarios we couldn’t imagine when we first started the project. In our case, we’ve started creating parsers for config files of the most popular tools used during the build process (tsconfig.json, .babelrc, and webpack.config.js so far) and rules related to them:

- By default TypeScript output is ES3 compatible, but maybe you don’t need to go all the way down and could be using ES5, ES6, etc. Using the information of your browserslist, we’ll tell you what target you should have in your tsconfig.json file.

- If you are using webpack, you should have "modules": false in your .babelrc file to make sure you have better tree shaking and thus, less generated code.

These are just some relatively basic examples of what’s possible. Parsers allow you to create rules for virtually anything. For example, someone could create a parser that understands the metadata of image files and then a rule that checks that all the images have a valid “copyright status.”
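To give an idea of how small such a rule can be, here is a rough sketch of the .babelrc check mentioned in the list above. The function hasModulesDisabled is hypothetical and is not sonarwhal’s actual rule. It assumes the file is strict JSON and that the option is set on the env preset.

```typescript
/**
 * Hypothetical check, not sonarwhal's actual rule: returns true when a
 * .babelrc enables the env preset with the "modules": false option.
 * Assumes the file content is strict JSON (no comments).
 */
function hasModulesDisabled(babelrcContent: string): boolean {
    const config = JSON.parse(babelrcContent) as { presets?: unknown };
    const presets: unknown[] = Array.isArray(config.presets) ? config.presets : [];

    return presets.some((preset) => {
        // Presets with options are written as ["name", { ...options }].
        if (!Array.isArray(preset)) {
            return false;
        }

        const [name, options] = preset as unknown[];
        const isEnv = name === "env" || name === "@babel/preset-env";

        return isEnv &&
            typeof options === "object" &&
            options !== null &&
            (options as { modules?: unknown }).modules === false;
    });
}
```

A rule built on top of this would only need to report an issue when webpack is in use and the function returns false.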

sonarwhal analyzing configuration files for webpack and TypeScript

sonarwhal v1 is now available! Go get it while it’s fresh!

As you can see, we’ve been busy! After all that, we’re finally ready to announce the first major version of sonarwhal!

While this is a big milestone for us, it doesn’t mean we are going to remain idle. Indeed, now that GitHub organization projects can be public we’ve opened up ours so you can know the project’s priorities and what we are working on.

Some of the things we are most excited about are:

- New rules: you can expect more rules around security, performance, PWA, and development very soon.

- User actions for the browser: sometimes the page or scenario you need to test requires a bit of interaction to get to it. We are looking into ways to allow you to control the browser before a scan.

- Custom configuration for the online scanner: the user should be capable of deciding everything, even when using the online scanner.

- Notifications for the online scanner: some websites take longer than others to scan and it is easy to forget you have something running in a background tab. We will add opt-in notifications to let you know when all the results are gathered.

sonarwhal is completely open source and community driven. Your feedback is really appreciated and helps us prioritize this list. And if you want to help developers all around the world, join us!

– Antón Molleda, Senior Program Manager, Microsoft Edge

Source: Windows Blog
